Adaptrade Software Newsletter Article

Deep Neural Networks for Price Prediction, Part 2

In part 1 of this series of articles, I introduced the use of deep neural networks (DNNs) for predicting market prices for trading. I illustrated how a forthcoming version of my trading strategy generator, Adaptrade Builder, can be used to evolve a set of inputs for a DNN, and I built populations of DNNs for four different symbols.

The results were compelling. In particular, for each symbol, the out-of-sample performance was excellent without any special treatment or effort. For example, no rebuilds were necessary, and the out-of-sample performance was consistent throughout the population. However, the results were limited in that the DNN was evaluated by itself rather than as part of a trading strategy, which is the ultimate goal of this new capability. In this article, I'll take the next step and show how these artificial neural networks can be used as part of a trading strategy.

Specifically, I want to answer the following questions:

- Does positive performance based on the metrics presented in part 1 translate into positive strategy trading results?

- Will the genetic programming process (build algorithm) be able to find strategy logic that complements the price prediction DNN and takes full advantage of it?

- Does the DNN provide substantial benefit over what can be achieved with the current features and capabilities of Adaptrade Builder?

The TL;DR answer to all three questions is a resounding "yes".

Intraday Price Prediction

To answer these questions, I started with 5-minute bars of the E-mini S&P 500 futures (symbol ES), day session only, consisting of 59,174 bars of data from 11/1/2019 to 9/15/2022. I set the training segment to the first 80% of the data (through 2/17/2022). The remaining 20% was divided equally between the test and validation segments (10% of the data each), and since neither of these segments was used in the training process, they were both fully out-of-sample.
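
As a rough check on the segment sizes, the split can be sketched as follows. The helper name `split_segments`, the chronological order (training, then test, then validation), and the rounding convention are assumptions for illustration; Builder's exact boundary handling may differ.

```python
def split_segments(n_bars, train_frac=0.80, test_frac=0.10):
    """Return (start, end) index ranges, end exclusive, for three chronological segments."""
    n_train = int(n_bars * train_frac)
    n_test = int(n_bars * test_frac)
    train = (0, n_train)
    test = (n_train, n_train + n_test)
    validation = (n_train + n_test, n_bars)  # whatever remains after training and test
    return train, test, validation


train, test, validation = split_segments(59_174)
for name, (start, end) in (("training", train), ("test", test), ("validation", validation)):
    print(f"{name:<10} bars {start:>6} to {end - 1:>6}  ({end - start} bars)")
```

Under these assumptions the training segment holds 47,339 bars, which is the "about 47,000 bars" figure that appears later in the discussion.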

In part 1, I explained that longer prediction windows are more accurate. With this in mind, I set the prediction window to 25 bars. For context, this implies the DNN is predicting the price 2 hours and 5 minutes ahead, whereas the day session is 6 hours and 45 minutes long. For the first 4 hours and 40 minutes, the prediction will be for the same session. Within 2 hours and 5 minutes of the close, the prediction will be for the following day.
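
The timing arithmetic in this paragraph can be verified with a few lines of Python. The variable names are illustrative only.

```python
BAR_MINUTES = 5
WINDOW_BARS = 25
SESSION_MINUTES = 6 * 60 + 45  # day session length: 6 hours 45 minutes

window_minutes = WINDOW_BARS * BAR_MINUTES               # how far ahead the DNN predicts
bars_per_session = SESSION_MINUTES // BAR_MINUTES        # 5-minute bars in one day session
same_session_minutes = SESSION_MINUTES - window_minutes  # span where the target is still today


def fmt(minutes):
    """Format a minute count as hours and minutes."""
    hours, mins = divmod(minutes, 60)
    return f"{hours} h {mins:02d} min"


print("prediction window:", fmt(window_minutes))
print("bars per session: ", bars_per_session)
print("same-session span:", fmt(same_session_minutes))
```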

The neural network build settings are shown below in Fig. 1. In addition to minimizing the mean squared error, I also chose to minimize the complexity of the neural network inputs (with a small weighting) in order to keep the inputs from becoming too complex. To bias the inputs and weights of the network towards trading performance, I added a condition (constraint) for the percentage of correct direction predictions.

Figure 1. Settings used to build a population of DNNs for 5-minute bars of ES.

The settings for the neuroevolutionary (genetic) training algorithm consisted of a population size of 250 with 50 generations, a crossover percentage of 75%, and a mutation percentage of 5%. Following the genetic training, I fine-tuned the weights using the Adam method (250 epochs, as shown in the figure).

The settings for the evolution of the population of DNNs are made on the GP Settings menu in Builder, the same as when evolving a population of strategies. In this case, I selected a small population of 32 — chosen to be a multiple of the number of CPU cores (16) — with just 10 generations.

As with the examples in part 1, the evolution of the population proceeded smoothly, with the fitness on both the training and test segments increasing fairly steadily over the 10 generations of the build process (not shown), indicating a good quality build.

Following the conclusion of the build process, the top network ended up with the following single input:

Input 1: Momentum(C, 100)

The momentum indicator was guaranteed to be one of the inputs because I included it in each network using the "Include" option on the Indicators menu. I did this because momentum with a look-back length of N is defined as the price change over the past N bars, and the neural network is trained to predict price changes, which are then converted to price predictions.
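
To make the connection between momentum and the training target concrete, here is a minimal sketch in plain Python. The function names are hypothetical and the code is not taken from Builder; it only illustrates that momentum is the backward-looking version of the price change the network is asked to predict.

```python
def momentum(close, n):
    """Price change over the past n bars: close[t] - close[t - n]; None until n bars exist."""
    return [None if t < n else close[t] - close[t - n] for t in range(len(close))]


def future_change(close, window):
    """Training target: price change over the next `window` bars; None where it is unknown."""
    last = len(close) - window
    return [close[t + window] - close[t] if t < last else None for t in range(len(close))]


def predicted_price(close_now, predicted_change):
    """Convert a predicted price change back into a predicted price."""
    return close_now + predicted_change


close = [100.0, 101.0, 103.0, 102.0, 105.0, 107.0]
print(momentum(close, 2))       # [None, None, 3.0, 1.0, 2.0, 5.0]
print(future_change(close, 2))  # [3.0, 1.0, 2.0, 5.0, None, None]
```

The two lists contain the same values shifted by the window length, which is why momentum is a natural input for a network trained on price changes.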

Some of the build results are shown below for each segment. As with the examples in part 1, some of the out-of-sample results (in this case, MSE, Net Points/Bar, and Dir Correct) are as good as or better than those on the training segment.

Table 1. Build results for the top DNN on 5-minute bars of ES, by segment.

| Metric | Training | Test | Validation |
|---|---|---|---|
| MSE | 0.0746 | 0.0746 | 0.0745 |
| Net Points | 11362 | 2337 | 2311 |
| Net Points/Bar | 0.240 | 0.395 | 0.395 |
| Dir Correct (Pct) | 59.2% | 62.4% | 62.3% |
| Ave Error (Pct) | 0.46% | 0.62% | 0.57% |
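
For readers who want to see how two of these metrics are typically calculated, the following is a minimal sketch using textbook definitions. It assumes MSE is the mean of squared prediction errors and that a direction is "correct" when the predicted and actual price changes have the same sign; the exact definitions and data scaling used in Builder are described in part 1 and may differ.

```python
def mse(predicted, actual):
    """Mean squared error between predicted and actual values."""
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)


def pct_direction_correct(predicted_change, actual_change):
    """Percentage of bars where predicted and actual changes share the same sign."""
    hits = sum(1 for p, a in zip(predicted_change, actual_change) if p * a > 0)
    return 100.0 * hits / len(actual_change)


# Toy data, not taken from the ES results.
predicted = [1.5, -0.5, 2.0, -1.0]
actual = [1.0, 0.5, 3.0, -2.0]
print(mse(predicted, actual))                    # 0.8125
print(pct_direction_correct(predicted, actual))  # 75.0
```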

A Simple Strategy Test

Now that we have a DNN for 5-minute bars of the ES, what's a simple way to test whether it will be useful for trading? Because the DNN was trained to predict the price 25 bars ahead, the simplest trading strategy based on it would be to buy at the market if the network predicts the price will be higher (or sell short if it predicts the market will be lower) and exit at market 25 bars later.

Fortunately, it's easy enough to build such a strategy in Adaptrade Builder. It only requires a population size of 1 with zero generations. On the DNN Settings window (Fig. 1), I checked the box "Include DNN in strategies" and selected the DNN shown above, which I had previously saved from the Build Results tables. I then removed all the indicators from the Indicators list so that no entry logic other than for the DNN would be included in the strategy. I also removed all orders from the Orders list except for the market entry order and the order to exit after N bars. Lastly, on the Parameter Ranges window, I set the range for "Exit-After Number of Bars" to 25 to 25, which guaranteed that the exit would always occur after exactly 25 bars.

When a DNN is selected to be included in strategies, Builder includes an additional entry condition that must be met in order to place the entry order. The long entry condition is that the predicted price minus the current price must be greater than the minimum price change (in points) specified on the DNN Settings window (see Fig. 1, bottom of image). Similarly, for a short trade, the current price minus the predicted price must be greater than the minimum price change. For this simple test, I set the minimum price change to zero. Also, to make the results more realistic and comparable to other results I'll present shortly, I set the trading costs to $10 per round turn and used a fixed position size of one contract per trade.
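
The logic of this simple strategy can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Builder's generated strategy code: the function name is hypothetical, fills are taken at the close of the signal bar for simplicity, only one position is held at a time, and the $50 point value comes from the ES contract specification rather than from this article.

```python
POINT_VALUE = 50.0          # dollars per ES point (contract specification; assumption here)
COST_PER_ROUND_TURN = 10.0  # dollars per trade, as in the article
HOLD_BARS = 25              # exit this many bars after entry
MIN_CHANGE = 0.0            # minimum predicted price change, in points


def simple_dnn_trades(close, predicted, hold_bars=HOLD_BARS, min_change=MIN_CHANGE):
    """Trade in the predicted direction and exit hold_bars later; return net P&L per trade."""
    trades = []
    t = 0
    while t + hold_bars < len(close):
        edge = predicted[t] - close[t]  # predicted price minus current price
        if edge > min_change:
            direction = 1               # long entry condition met
        elif -edge > min_change:
            direction = -1              # short entry condition met
        else:
            t += 1                      # no signal on this bar
            continue
        points = direction * (close[t + hold_bars] - close[t])
        trades.append(points * POINT_VALUE - COST_PER_ROUND_TURN)
        t += hold_bars                  # flat again at the exit bar
    return trades


# Toy example with a 2-bar holding period (prices are made up).
close = [100.0, 102.0, 101.0, 99.0, 103.0, 103.0, 104.0]
predicted = [101.0, 101.5, 100.0, 100.0, 103.0, 104.0, 105.0]
print(simple_dnn_trades(close, predicted, hold_bars=2))  # [40.0, -110.0]
```

In the toy run, the first trade is a long that gains one point ($50 less $10 in costs) and the second is a short that loses two points ($100 plus $10 in costs).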

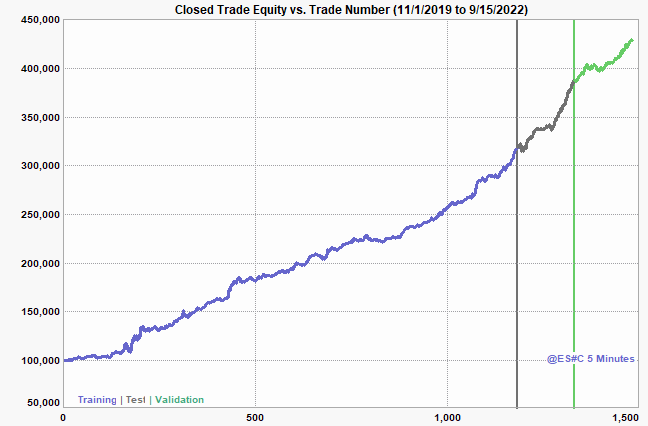

The equity curve resulting from running this strategy on the same data used to build the DNN is shown below in Fig. 2. In Table 2, I've listed several performance metrics for the different segments. Since this strategy uses the same data as used to build the DNN, both the test and validation segments are out-of-sample here as well.

Figure 2. Equity curve generated by a simple strategy using a DNN on 5-minute bars of ES with one contract per trade and $10 per round turn deducted for costs.

Table 2. Performance metrics for the simple DNN strategy on 5-minute bars of ES, by segment.

| Metric | Training | Test | Validation |
|---|---|---|---|
| Net Profit | $218,598 | $68,308 | $42,250 |
| No. Trades | 1184 | 148 | 150 |
| Ave Trade | $185 | $462 | $282 |
| Pct Wins | 55.2% | 62.2% | 58.0% |
| Prof Factor | 1.82 | 2.14 | 1.99 |
| Drawdown | $11,288 | $10,668 | $8,658 |
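
As a reference for how metrics like these relate to a list of trades, here is a minimal sketch using common definitions: average trade is net profit divided by the number of trades, profit factor is gross profit divided by gross loss, and drawdown is the largest peak-to-valley decline in closed-trade equity. The function name is hypothetical, and Builder's exact calculations (for example, whether drawdown is measured intra-trade) may differ.

```python
def trade_metrics(trades):
    """Summarize a list of per-trade net profits (dollars) with common performance metrics."""
    wins = [x for x in trades if x > 0]
    losses = [x for x in trades if x < 0]
    gross_profit = sum(wins)
    gross_loss = -sum(losses)

    equity = peak = max_drawdown = 0.0
    for pnl in trades:
        equity += pnl
        peak = max(peak, equity)                        # highest closed-trade equity so far
        max_drawdown = max(max_drawdown, peak - equity)  # deepest decline from that peak

    return {
        "net_profit": sum(trades),
        "num_trades": len(trades),
        "ave_trade": sum(trades) / len(trades),
        "pct_wins": 100.0 * len(wins) / len(trades),
        "profit_factor": gross_profit / gross_loss if gross_loss > 0 else float("inf"),
        "drawdown": max_drawdown,
    }


# Toy trade list, not taken from the ES results.
metrics = trade_metrics([300.0, -100.0, 200.0, -150.0, 250.0])
print(metrics)
```

The same relationship can be checked against the table above: dividing the training net profit of $218,598 by 1,184 trades gives about $185, matching the Ave Trade row.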

This simple strategy test demonstrates that the positive metrics shown in Table 1 for the DNN successfully translate into trading strategy results. In fact, I would argue that even this simple strategy, which required no optimization (aside, of course, from the training of the DNN itself), is a fairly good strategy, with a solid profit factor, decent average trade size, good winning percentage, and reasonable drawdown. Notice that the winning percentage is close to the percentage of correct directional predictions for the DNN, as should be expected. They're not the same because the percentage of correct directional predictions is over all bars of data, whereas the winning percentage of the strategy is only for the bars where a trade was taken.

Evolving a Strategy with a DNN

Simply following the predictions of the DNN produces decent results, so can the evolutionary build process of Builder improve on this? To find out, I started with the same data as above but with more typical build settings. In particular, I set the population size to 300 with 100 generations (no build failure rules). The only indicators I removed from the Indicators list were Trix and the volume-based indicators, which is just a matter of personal preference. The only orders I removed from the Orders list were the end-of-day and end-of-week order types. I left the other settings at their defaults except for the build metrics. For the metrics, I added complexity as an objective with a small weight. I set the build conditions (metric constraints) to match those of the next example, discussed below, which is identical to this one except that it has no DNN. Using the same metrics and conditions in both builds allows the results to be compared more directly. All other settings were the same as in the first example.

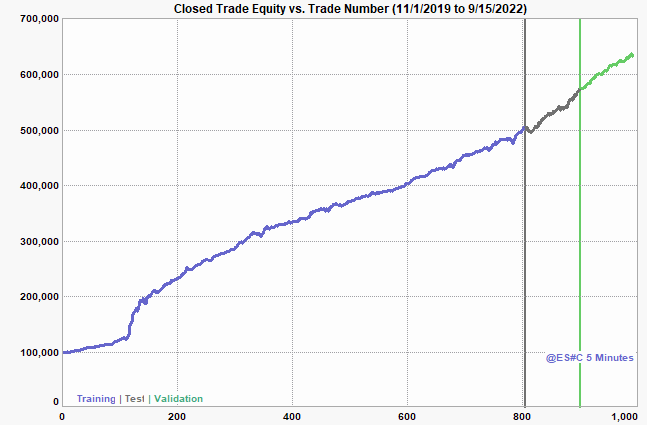

The results of this build are shown below in Fig. 3 and Table 3. No rebuilds were made, no adjustments to metrics or any settings were required, and I simply took the top strategy based on the training fitness. As can be seen, the results are substantially better than those of the simple strategy above (Fig. 2, Table 2): higher net profit, average trade, percent winners, and profit factor. Notably, the improvements are not just in the training segment but also in the out-of-sample segments. Clearly, the build process was able to take advantage of the DNN and come up with something better than the unoptimized strategy presented above.

Figure 3. Equity curve generated by evolving a strategy using a DNN on 5-minute bars of ES with one contract per trade and $10 per round turn deducted for costs.

Table 3. Performance metrics for the evolved strategy using a DNN on 5-minute bars of ES, by segment.

| Metric | Training | Test | Validation |
|---|---|---|---|
| Net Profit | $404,325 | $72,940 | $58,038 |
| No. Trades | 805 | 96 | 90 |
| Ave Trade | $502 | $760 | $645 |
| Pct Wins | 66.2% | 68.8% | 64.4% |
| Prof Factor | 2.91 | 2.43 | 3.13 |
| Drawdown | $11,270 | $12,928 | $5,645 |

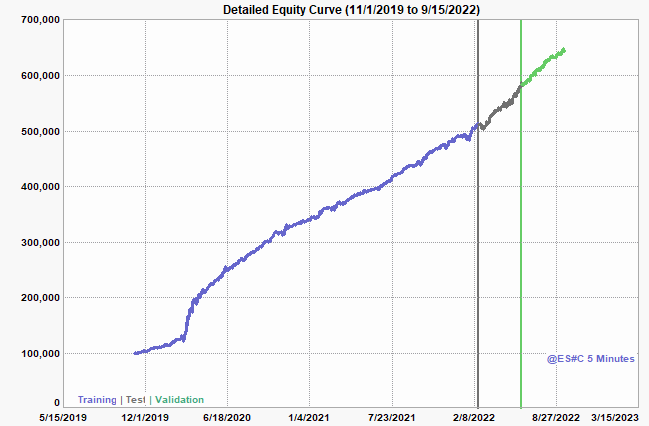

Nonetheless, an astute trader might question whether the smooth, straight equity curve is hiding large intra-trade drawdowns that don't show up on a chart of closed-trade equity. In such cases, the maximum MAE (maximum adverse excursion) will be much larger than the largest loss, representing a large intra-trade drawdown that recovered prior to the trade's exit. That would certainly be a red flag. Fortunately, that is not the case here, where the Max MAE is -$6,825 and the largest loss is -$6,588. This is also reflected in the so-called detailed equity curve, shown below in Fig. 4, which plots the bar-by-bar equity against the date. As can be seen in the figure, the shape of the detailed equity curve is essentially the same as the closed-trade equity curve of Fig. 3.
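
The MAE check described here can be sketched as follows. The function name and the toy prices are hypothetical, and the $50 point value is the ES contract multiplier rather than a figure from this article. The last lines compare the two values reported above.

```python
def max_adverse_excursion(entry_price, direction, lows, highs, point_value=50.0):
    """Worst open loss (dollars, <= 0) during one trade; direction is +1 long, -1 short."""
    if direction > 0:
        worst_points = min(lows) - entry_price   # deepest dip below a long entry
    else:
        worst_points = entry_price - max(highs)  # highest spike above a short entry
    return min(0.0, worst_points * point_value)


# Toy long trade: dips 10 points against the position before recovering.
lows = [3995.0, 3990.0, 4002.0]
highs = [4005.0, 4001.0, 4010.0]
print(max_adverse_excursion(4000.0, 1, lows, highs))  # -500.0

# Values reported for the evolved strategy.
max_mae, largest_loss = -6825.0, -6588.0
mae_to_loss_ratio = max_mae / largest_loss
print(round(mae_to_loss_ratio, 3))  # 1.036
```

A ratio close to 1, as here, means the worst intra-trade excursion was only slightly larger than the worst realized loss.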

Figure 4. Detailed equity curve for the evolved strategy shown in Fig. 3.

To reassure skeptics (and myself) that the results are legitimate and not too good to be real, I ran two tests to make sure only the training data were being used in training the DNN. If, by some error, data from the test and/or validation segments were used in training, it would invalidate the results shown above. I first examined the training batches — recall that each batch consists of 32 randomly selected training samples — to make sure only training samples were contained in each batch. Then, as a final test, I copied the price data file and erased the test and validation segments so that it only contained the training data. I then reran the DNN build process on this modified data file with the training segment set to 100%. After obtaining the trained DNN, I recreated the simple strategy of Fig. 2 using the complete ES data file. The results on the test and validation segments were just as good as shown above.
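
The first of these checks amounts to confirming that every sample index in every batch falls inside the training segment. A minimal sketch follows, assuming samples are indexed by bar number and batches are drawn by shuffling the training indices. Both function names are hypothetical, and this is not Builder's training code.

```python
import random


def make_batches(n_train, batch_size=32, seed=0):
    """Shuffle the training indices and cut them into batches of batch_size."""
    rng = random.Random(seed)
    indices = list(range(n_train))
    rng.shuffle(indices)
    return [indices[i:i + batch_size] for i in range(0, n_train, batch_size)]


def batches_stay_in_training(batches, n_train):
    """True if no batch contains an index at or beyond the end of the training segment."""
    return all(0 <= i < n_train for batch in batches for i in batch)


n_train = 47_339  # first 80% of 59,174 bars (rounding is an assumption)
batches = make_batches(n_train)
print(len(batches), "batches; clean:", batches_stay_in_training(batches, n_train))

# A deliberately contaminated batch is flagged.
print(batches_stay_in_training([[0, 1, n_train]], n_train))  # False
```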

Is it the DNN that makes the difference?

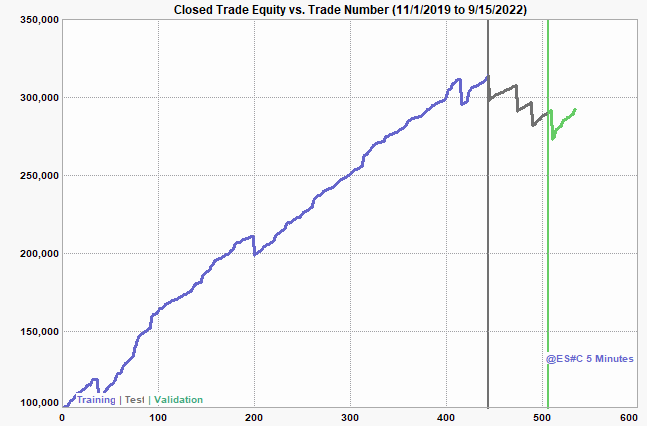

One might reasonably ask, "OK, but how do I know I couldn't get just as good results without using a deep neural network?" In other words, maybe the nice, straight equity curve and excellent out-of-sample performance are just a fluke of this market and/or time period. To find out, I ran one final build. I started with the project file from the prior example and unchecked the box on the DNN Settings window for "Include DNN in strategies". All other settings were the same: the same data, build metrics, indicators, order types, and so on.

The equity curve for the top strategy based on the training segment from this build is shown below in Fig. 5. The out-of-sample results are quite poor, suggesting that the data from the training segment has been over-fit or, perhaps, that the out-of-sample segments are just too different from the training segment for the results to generalize. This strategy was not unique in the population; I scanned down the Build Results table and was unable to find a good strategy. I subsequently ran two other builds with the same settings in case the random nature of the build process was responsible for an unlucky outcome. The results were largely the same each time.

Typically, when poor results like this are found, there are several possible ways to proceed. These include adjusting the build metrics, using the stress testing option to overcome a possible over-fitting problem, and changing the segment boundaries (in case they happen to be placed where the market dynamics have changed). It's also possible that adding more data, either to the same symbol or by adding another symbol, could help improve the out-of-sample performance.

However, the point here is that none of that was necessary when the DNN was part of the strategy. In that case, excellent results were found with very little effort. Since the inclusion of the DNN was the only difference between the results in Fig. 3 and those in Fig. 5, it's reasonable to conclude that the DNN was responsible for the superior results.

Figure 5. Equity curve generated by evolving a strategy without including a DNN on 5-minute bars of ES with one contract per trade and $10 per round turn deducted for costs. Except for excluding the DNN, all settings are the same as for the results shown in Fig. 3.

Summary and Discussion

In part 1 of this series, the results from building stand-alone deep neural networks were promising, but it was not possible to conclude that DNNs would be beneficial as a component of trading strategies. In this article, I sought to demonstrate their utility by answering the three questions posed at the beginning of the article. While I limited my analysis to one price series, I think the results are convincing. The DNN's performance translated into good strategy results, the genetic build process was able to effectively utilize the DNN, and the DNN was clearly responsible for results that would be difficult, at best, to obtain without it.

As I noted in part 1, one of the surprising features of the DNNs is how well they hold up out-of-sample. This clearly translated into the trading strategy results shown in this article. Finding trading logic that generalizes well out-of-sample is rarely easy, which is why I'm committed to fully developing this feature for Adaptrade Builder. In fact, in my 30 years of developing trading strategies and trading software, I've never seen a technique or trading method that generalizes as well to out-of-sample data as these DNNs. They seem to have an innate ability to extract the signal from the market noise better than any other trading method.

Judging by the literature on large language models (LLMs), the reason for the good generalization abilities of DNNs is not fully known. However, it does occur to me that one factor may be that, in the case of market prediction, the network is trained on a prediction for every bar of data. For example, for the 5-min ES data used in the examples presented here, there were about 47,000 bars of data in the training segment and therefore about 47,000 predictions. Compare that to a typical trading strategy that may have a few hundred or perhaps a few thousand trades. The much larger number of predictions for the DNN may partially account for the better statistical results.
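
One way to quantify the sample-size argument is the standard error of an estimated percentage, which shrinks with the square root of the number of samples. The sketch below assumes independent samples and a true hit rate of 60%, both chosen only for illustration. Because the 25-bar prediction windows overlap, consecutive predictions are correlated, so the effective sample size for the DNN is smaller than the raw bar count suggests.

```python
import math


def std_error_of_proportion(p, n):
    """Standard error of a proportion p estimated from n independent samples."""
    return math.sqrt(p * (1.0 - p) / n)


for label, n in (("DNN predictions", 47_000), ("strategy trades", 500)):
    se = std_error_of_proportion(0.6, n)
    print(f"{label:<16} n={n:>6}  std. error = {100 * se:.2f} percentage points")
```

Under these assumptions, the estimate based on 47,000 samples is roughly ten times tighter than the one based on 500 trades.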

My plan is for this DNN feature to be part of Adaptrade Builder version 5. While the work has taken much longer than I expected or hoped, it's proceeding well. Nearly every part of Builder has been co-opted for what will be a dual-purpose program: building trading strategies and building and using DNNs. That has meant that nearly every part of the code base has required changes, resulting in the extended development schedule. I'll provide further details when available and will release version 5 as soon as possible.

Good luck with your trading.

Mike Bryant

Adaptrade Software

This article appeared in the November 2025 issue of the Adaptrade Software newsletter.

HYPOTHETICAL OR SIMULATED PERFORMANCE RESULTS HAVE CERTAIN INHERENT LIMITATIONS. UNLIKE AN ACTUAL PERFORMANCE RECORD, SIMULATED RESULTS DO NOT REPRESENT ACTUAL TRADING. ALSO, SINCE THE TRADES HAVE NOT ACTUALLY BEEN EXECUTED, THE RESULTS MAY HAVE UNDER- OR OVER-COMPENSATED FOR THE IMPACT, IF ANY, OF CERTAIN MARKET FACTORS, SUCH AS LACK OF LIQUIDITY. SIMULATED TRADING PROGRAMS IN GENERAL ARE ALSO SUBJECT TO THE FACT THAT THEY ARE DESIGNED WITH THE BENEFIT OF HINDSIGHT. NO REPRESENTATION IS BEING MADE THAT ANY ACCOUNT WILL OR IS LIKELY TO ACHIEVE PROFITS OR LOSSES SIMILAR TO THOSE SHOWN.